The $96 Million Blind Spot: Why the AEO Category Is Measuring the Wrong Turn

Profound published a 3,000 word comparison of itself against AthenaHQ this week. It is the wrong comparison. Here is the gap both platforms share.

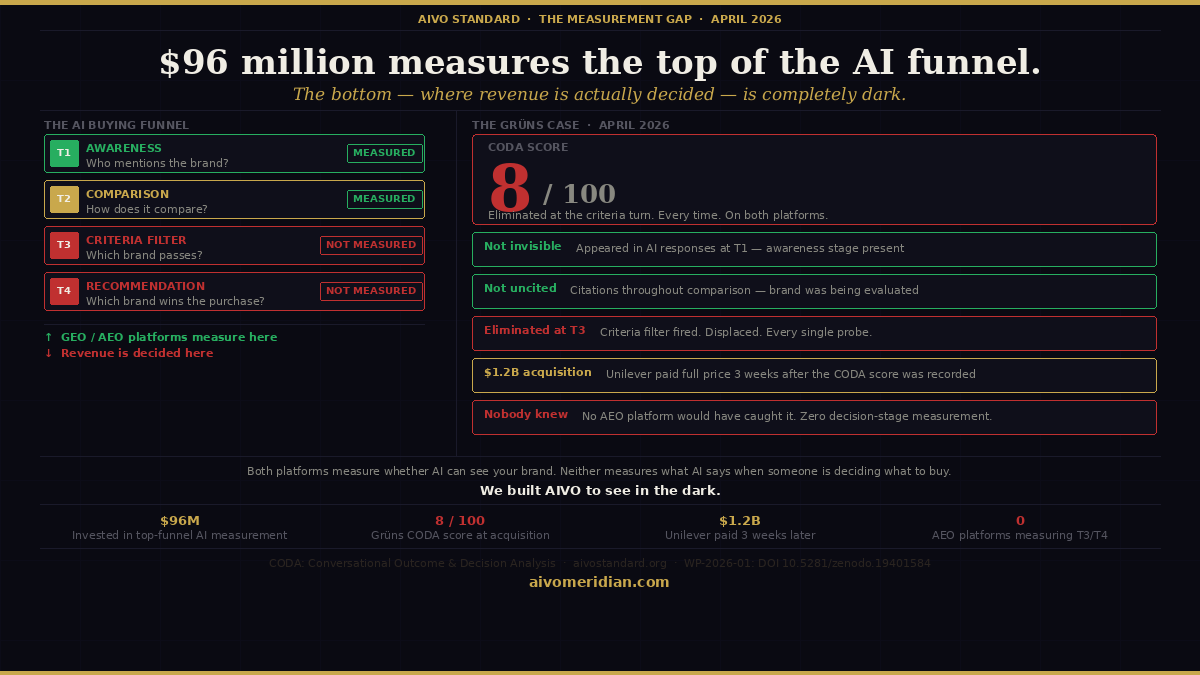

There is a turn in every AI buying conversation that the entire GEO and AEO category has collectively decided not to measure.

It happens when the AI platform stops listing options and starts eliminating them. A buyer has asked their initial question. The model has surfaced the consideration set. The buyer has asked a follow-up — comparing options, narrowing criteria, applying the specific framework that will produce a final recommendation. At this turn, the model evaluates the brands in the conversation against a criteria filter. Some brands satisfy it. Most do not. The ones that do not are eliminated — not criticised, not ranked lower, simply no longer present when the buyer receives the final recommendation.

This is Turn 3 of a structured buying sequence. And $96 million has been invested in measuring Turn 1.

What Profound and AthenaHQ actually measure

Profound is the category leader in GEO and AEO measurement, having raised $96 million in venture capital and serving more than 700 enterprise clients including 10% of the Fortune 500. AthenaHQ is the most technically sophisticated challenger. This week Profound published a detailed comparison of its platform against AthenaHQ.

The comparison is interesting. But it is the wrong competitive frame. Both platforms measure whether AI can see your brand — first-prompt citation frequency, share of AI voice, brand mention rate across major platforms. These are real and measurable signals. They are not the signal connected to the commercial outcome brands are purchasing when they invest in AI visibility improvement.

The commercial outcome — AI purchase recommendation performance — is determined at Turn 3 and Turn 4 of the buying sequence. Neither Profound nor AthenaHQ measures it. Not because they have chosen not to — but because measuring it requires a fundamentally different methodology: structured four-turn buying sequences that simulate the complete purchasing conversation and classify brand state at every turn.

The Grüns finding

In April 2026, AIVO measured Grüns — a supplement brand that had just announced a $1.2 billion acquisition by Unilever — using the CODA four-turn buying sequence protocol across ChatGPT, Perplexity, Gemini, and Grok.

The CODA score was 8 out of 100.

Grüns was not invisible. The brand appeared in AI responses at Turn 1 — the awareness stage where GEO measurement operates. It was not uncited. Citations were present throughout the comparison stage. But at Turn 3 — the criteria evaluation stage — the model applied a filter that Grüns could not satisfy. The brand was displaced. Every single probe. Across both ChatGPT and Perplexity.

Three weeks after the CODA score was recorded, Unilever completed a $1.2 billion acquisition of the brand.

Nobody at Grüns knew. No AEO platform would have detected it. The brand was being systematically eliminated at the AI purchase decision stage at the exact moment Unilever was validating its commercial value against every other metric available.

Why the category cannot measure this

The GEO and AEO category measures citation frequency because citation frequency is measurable, improvable on a short timeline, and reportable in dashboard form. Decision-stage recommendation outcomes require a structured buying sequence probe methodology — running the complete purchasing conversation, classifying brand state at every turn, recording the verbatim criteria the model applied at Turn 3, and calculating the Revenue at Risk from the displacement finding. This is a different measurement architecture from citation counting. It is not a feature that can be added to a monitoring platform — it is a different product.

The invisible metric is therefore also the unmeasured and unaddressed metric. The GEO category is optimising for the metric it can improve. The question of whether improving that metric improves the commercial outcome — AI purchase recommendation performance — is not asked because it cannot be answered by the measurement infrastructure the category has built.

The broader finding

Last week AIVO published a peer-archived working paper documenting this gap across five named enterprise brands: Chanel N°5, DocuSign, Akamai Technologies, Clarins, and TUI. All five with Meridian probe data. Two are named clients of the leading GEO platforms. The paper introduces the term layer mismatch to describe the structural gap between the evidence layer GEO platforms optimise and the evidence layer that determines AI purchase recommendation outcomes.

The paper documents three conflict mechanisms through which GEO content can actively worsen decision-stage performance — entity definition conflict, claim consistency conflict, and temporal weighting conflict — and explains why the GEO category cannot address these mechanisms regardless of platform investment or product development.

Chanel N°5 — 103 years of cultural recognition, in continuous production since 1921 — is absent from Gemini's spontaneous consideration set for luxury perfume because its knowledge graph entity anchor is not correctly structured for the fragrance category. Profound's content agents cannot detect this. AthenaHQ's optimisation tools cannot fix it. The problem is at a layer neither platform can reach.

The measurement question that follows

If you are investing in GEO or AEO platforms, the question worth asking is not whether your brand is appearing in AI responses — it is whether it is winning the AI purchase recommendation when a buyer applies criteria and asks for a final decision. Those are different questions with different answers and different measurement requirements.

The layer mismatch between where the GEO category invests and where commercial outcomes are determined is the blind spot that $96 million has not yet illuminated.

We built AIVO to see in the dark.

WP-2026-08: The Layer Mismatch is open access at aivostandard.org. DOI: 10.5281/zenodo.19840293

AIVO Meridian: aivomeridian.com